Documents Image Quality Assessment Part-2

Aditya Mangal | Insurance Samadhan | Shivpoojan Saini

Welcome back, dear reader! In Part-1, we dipped our toes into the exciting world of Image Quality Assessment and made good progress using classification and regression models. However, like a master chef constantly refining a signature dish, we’re not quite satisfied yet. Our goal demands more accuracy, more precision, more… well, more everything! So in Part-2, we’re taking our exploration to the next level by looking at OCR text recognition models, which have the potential to give us the kind of granular detail and precision that our use case demands. So let’s roll up our sleeves, put on our thinking caps, and see where this rabbit hole leads us! But first, if you haven’t checked out Part-1 yet, what are you waiting for? 🤔🤔 Don’t be left behind: catch up now and join us on this exciting journey.

Thanks to MMOCR for creating such a wonderful toolbox. MMOCR is an open-source toolbox based on PyTorch and MMDetection for text detection, text recognition, and the corresponding downstream tasks, including key information extraction. It is part of the OpenMMLab project.

To set up MMOCR, you can refer to its documentation, which is very well written.

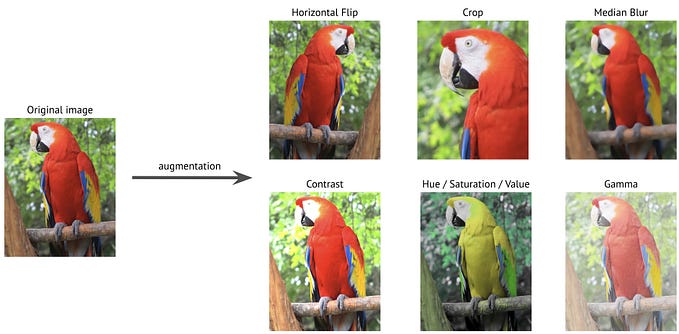

We tested several recognition methods on a 20k-image sample of the MJSynth dataset and compared their predictions with the actual text that comes with MJSynth. This lets us conclude which recognition model works best on a dataset of word images.
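As a minimal sketch of where the "actual text" can come from: MJSynth image files are, as far as we know, named like `115_Lube_45484.jpg`, with the word shown in the image sitting between the two numbers. The helper name `label_from_filename` and the example path are illustrative assumptions, not part of MMOCR.

```python
from pathlib import Path


def label_from_filename(path: str) -> str:
    """Return the ground-truth word encoded in an MJSynth-style file name.

    Assumes the pattern <index>_<word>_<lexicon id>.<ext>.
    """
    stem = Path(path).stem  # e.g. "115_Lube_45484"
    parts = stem.split("_")
    if len(parts) < 3:
        raise ValueError(f"unexpected file name: {path}")
    # Join the middle parts in case the word itself contains an underscore.
    return "_".join(parts[1:-1])


print(label_from_filename("2425/1/115_Lube_45484.jpg"))  # Lube
```

If your copy of the dataset ships with an annotation file instead, reading the labels from that file is the safer route.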

Let’s take a look at the code that recognizes a word image, and at its output.

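Import the libraries that will be used in the code:

    import cv2
    import matplotlib.pyplot as plt
    from mmocr.ocr import MMOCR

Make an object of the MMOCR class:

    model_name = 'ABINet'
    ocr = MMOCR(recog=model_name)

Read the text image:

    image = cv2.imread('sample.png')

Recognize the text image with the ABINet recognition model:

    result = ocr.readtext(image)

Output:

    print(result)
    # OpenCV loads images as BGR; convert to RGB so matplotlib shows the right colors
    plt.imshow(cv2.cvtColor(image, cv2.COLOR_BGR2RGB))
    plt.show()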

For every word image, we are interested in the confidence score that the model returns along with the recognized text. Now we can compare the recognition methods on our 20k-image sample from the MJSynth dataset.

We counted each prediction as correct or incorrect by comparing the predicted text with the actual text, after converting both to lower case.
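The comparison described above can be sketched as follows. The predictions, labels, and scores are made-up values for illustration only, and `evaluate` is a hypothetical helper, not an MMOCR function. It shows the case-insensitive matching, plus one possible way to summarize the confidence separately for correct and incorrect predictions.

```python
from statistics import mean


def evaluate(predictions, labels, scores):
    """Count case-insensitive matches and average the confidence per group."""
    correct_scores, incorrect_scores = [], []
    for pred, actual, score in zip(predictions, labels, scores):
        if pred.lower() == actual.lower():
            correct_scores.append(score)
        else:
            incorrect_scores.append(score)
    total = len(correct_scores) + len(incorrect_scores)
    return {
        "correct": len(correct_scores),
        "incorrect": len(incorrect_scores),
        "accuracy": len(correct_scores) / total if total else 0.0,
        "mean_conf_correct": mean(correct_scores) if correct_scores else None,
        "mean_conf_incorrect": mean(incorrect_scores) if incorrect_scores else None,
    }


# Toy data for illustration only (not results from our experiment).
preds = ["lube", "Spencerian", "acceptd", "CARPENTER"]
labels = ["Lube", "Spencerian", "accepted", "Carpenter"]
scores = [0.98, 0.95, 0.41, 0.90]

summary = evaluate(preds, labels, scores)
print(summary["correct"], summary["incorrect"], summary["accuracy"])  # 3 1 0.75
```

Running the same helper once per recognition model gives one row per model, which makes the methods easy to compare side by side.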

Here, we can conclude that ABINet works well on the word-image dataset. Now we can tweak the ABINet architecture and build a model for our Image Quality Assessment use case. If you haven’t read Part-1 yet, go and check it out to get the complete story of Image Quality Assessment.